Levmar Excel is a wrapper around levmar, one of the best Levenberg-Marquardt implementations out there. The wrapper allows you to use levmar in Excel via VBA, which is handy if you want to solve a nonlinear least squares problem. Alternatively, you could try the built-in Excel solver. While that solver is excellent, it isn’t easy to integrate in VBA code. Levmar Excel fills the gap: it gives you a least squares solver that is easy to integrate in VBA code. The remainder of the post explains how to set things up to get the demo spreadsheet working. (If you are a developer wanting more details on the source code of this project, look here.)

Author: Jorrit de Jong

Generalized Procedure for Building Short Rate Trees in Excel / VBA

In their 2014 paper John Hull and Alan White derive a generalized method for the construction of short rate trees. This generalization is interesting because it allows one tree (or lattice) construction algorithm to be used for all one-factor short rate models. The only difference between the various models is a single function, which is explained briefly here and in detail in the paper.

Compiling LevmarSharp (Visual Studio 2010)

Prerequisites:

-Visual Studio 2010

-levmar 2.6 (http://users.ics.forth.gr/~lourakis/levmar/)

-levmarsharp (https://github.com/AvengerDr/LevmarSharp)

For a recent research project we needed to solve an optimization problem. Specifically, we were trying to reproduce the results in the paper “A Generalized Procedure for Building Trees for the Short Rate and its Application to Determining Market Implied Volatility Functions” by Hull and White. The paper describes how a lattice can be constructed and calibrated to the market. The calibration is essentially an optimization problem in which the difference between the discount factors (or interest rates) observed in the market and the discount factors generated by the model is made as small as possible by varying the model parameters.
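To make the calibration idea concrete, here is a minimal sketch in plain Python. It does not use levmar or LevmarSharp, and it is not the Hull-White tree. The toy model (a zero rate that is linear in maturity), the function names `model_discount`, `residuals` and `calibrate`, and all numbers are assumptions chosen purely for illustration. The loop is a basic Levenberg-Marquardt-style iteration with a forward-difference Jacobian, which minimizes the sum of squared differences between model and market discount factors.

```python
import math


def model_discount(params, t):
    # Hypothetical toy model (not from the paper): zero rate a + b*t,
    # so the discount factor is exp(-(a + b*t) * t).
    a, b = params
    return math.exp(-(a + b * t) * t)


def residuals(params, maturities, market):
    # Model discount factor minus market discount factor, per maturity.
    return [model_discount(params, t) - p for t, p in zip(maturities, market)]


def calibrate(maturities, market, start, iterations=100, damping=1e-3):
    # Two-parameter Levenberg-Marquardt-style loop (illustrative only).
    params = list(start)
    res = residuals(params, maturities, market)
    cost = sum(r * r for r in res)
    h = 1e-7  # bump size for the forward-difference Jacobian
    for _ in range(iterations):
        if cost < 1e-20:
            break
        # Jacobian columns: sensitivity of each residual to each parameter.
        cols = []
        for j in range(2):
            bumped = list(params)
            bumped[j] += h
            bumped_res = residuals(bumped, maturities, market)
            cols.append([(rb - r) / h for rb, r in zip(bumped_res, res)])
        # Damped normal equations: (J^T J with scaled diagonal) * step = -J^T r.
        a11 = sum(u * u for u in cols[0])
        a22 = sum(v * v for v in cols[1])
        a12 = sum(u * v for u, v in zip(cols[0], cols[1]))
        g1 = sum(u * r for u, r in zip(cols[0], res))
        g2 = sum(v * r for v, r in zip(cols[1], res))
        m11 = a11 * (1.0 + damping)
        m22 = a22 * (1.0 + damping)
        det = m11 * m22 - a12 * a12
        step = [(a12 * g2 - m22 * g1) / det, (a12 * g1 - m11 * g2) / det]
        trial = [p + s for p, s in zip(params, step)]
        trial_res = residuals(trial, maturities, market)
        trial_cost = sum(r * r for r in trial_res)
        if trial_cost < cost:
            # Accept the step and trust the linearization a bit more.
            params, res, cost = trial, trial_res, trial_cost
            damping /= 10.0
        else:
            # Reject the step and damp more heavily.
            damping *= 10.0
    return params


# Synthetic "market" data generated from known parameters (made up).
maturities = [1.0, 2.0, 5.0, 10.0]
true_params = [0.02, 0.001]
market = [model_discount(true_params, t) for t in maturities]
fitted = calibrate(maturities, market, start=[0.05, 0.0])
print(fitted)
```

In a real calibration the residual function would price the discount factors off the tree instead of a closed-form curve, and a tested library such as levmar would replace the hand-written loop.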

Compiling Levmar using NMake (Visual Studio 2010)

Prerequisites:

-Visual Studio 2010 which comes with NMake

-levmar 2.6 (http://users.ics.forth.gr/~lourakis/levmar/)

For a recent research project we needed to solve an optimization problem. We considered using levmar by Lourakis. Not having touched C or built code using Make for a while, it took some time to get everything set up and building. This post describes the steps needed. Should you run into trouble, please consult the troubleshooting section at the end of this post. If you are interested in using levmar in C#, check out this blog post.

Reproduction Example 1 of Generalized Procedure for Building Trees

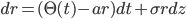

In a recent (2014) paper John Hull and Alan White demonstrate a generalized method for the construction of short rate trees. Keen to understand the model, we tried to reproduce the results of the first example in the paper, on page 10. The example considers the short rate model:

which is transformed using

The Geometry of Interest Rate Risk

In this paper we consider the process of interest rate risk management. The yield curve construction is revisited and emphasis is given to aspects such as input instruments, bootstrap and interpolation. For various financial products we present new formulas that are crucial to define sensitivities to changes in the instruments and/or in the curve rates. Such sensitivities are exploited for hedging purposes. We construct the risk space, which eventually turns out to be a curve property, and show how to hedge any product or any portfolio of products in terms of the original curve instruments.

Keywords: Yield curve, hedging, interest rate risk management.

Click here for the full paper.

Validation Hour Estimation of Project Plan

Ugly Duckling was asked to give a second opinion on the hour estimation of a large IT implementation project for a financial solution developed by a team outside the IT landscape. We started by creating a large, detailed list of each activity and sub-activity expected to be carried out during the project. The list contained an optimistic as well as a pessimistic estimate.

The optimistic approach assumes that all the (sub-)activities are carried out smoothly, with no unexpected delays or additional exceptional activities. In the pessimistic approach things go wrong or unexpected activities arise, i.e. project risks materialise. The pessimistic approach estimates the extra time needed to deal with these risks.

Based on the list it was easy for management to assess whether estimations were within realistic bounds and where further investigation was needed.

Note Why Multi Curve is Essential

The need for Multi Curve is becoming clearer in the marketplace. As a result many banks are moving from Single Curve valuation to Multi Curve, or have already done so. For a client we wrote a short paper listing some of the pros and cons of implementing Multi Curve. This paper was meant to trigger the discussion in the team and beyond.

Expert Meetings Residential Mortgage Prepayment Model

We helped a Dutch bank establish a vision on the prepayment level(s) of their residential mortgage portfolio by hosting a series of expert meetings. The goal of the sessions was to establish the prepayment level the bank expects in the near future, that is, within a horizon of 10 years. To this end the total prepayment level is split into 3 subparts: refinance, curtailments and relocation. We prepared a list of key factors driving these subparts, which was refined during the first session. For the subsequent sessions we provided more data and gave insight into the impact of the experts' choices. The final output of the work was a new set of parameters for the bank's prepayment model, along with the impact of the parameter update on the balance sheet.

Penalty Model Residential Mortgages

We derived a model to compute the penalty on a residential mortgage when a client prepays. The penalty conditions are described in the terms and conditions published by the bank. We used these terms and conditions to derive a theoretical penalty. This approach allowed us to build a model that doesn’t depend on statistical analysis.